If you've ever tried to find an AI model that can generate video with lip-synced audio from a text prompt, you know the frustration. Platforms like Replicate and FAL AI list dozens of models under broad categories like "image-to-video," but they offer no way to filter for the specific multimodal capabilities that actually matter for your use case.

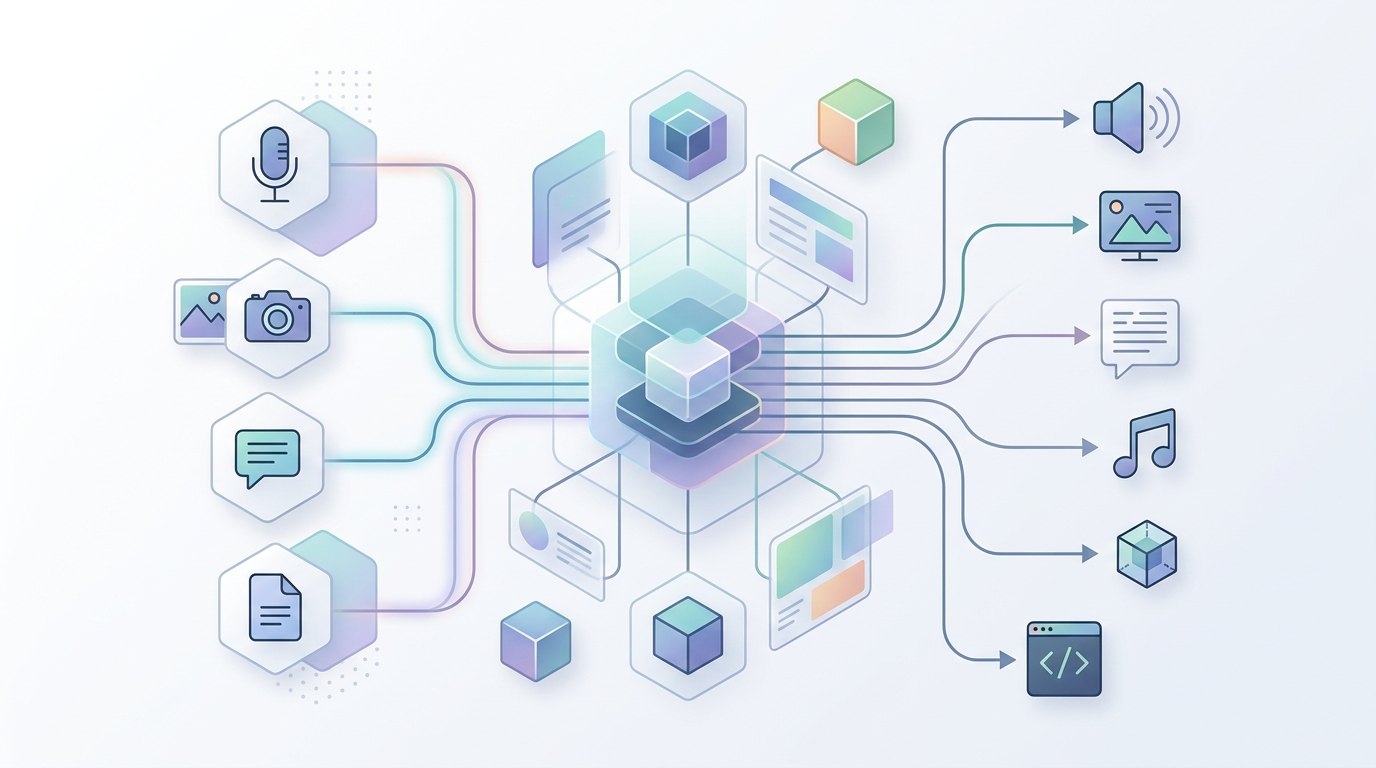

That's why I started building the Multimodal AI Taxonomy — a structured, open-source JSON taxonomy that maps which input modalities can produce which output modalities, with fine-grained distinctions that platforms currently ignore.

What the taxonomy captures

The taxonomy is organized by output type — video, audio, image, text, and 3D — with separate folders for creation versus editing operations. Each modality definition includes the primary and secondary inputs, output characteristics (like whether audio is included and what type), special capabilities like lip sync, and metadata about maturity level, platforms, and example models.
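To make that structure concrete, here is a rough sketch of what a single modality definition might look like, written as a Python dict that serializes straight to JSON. Every field name and value (`id`, `operation`, `input`, `capabilities`, and so on) is my own illustrative assumption based on the description above, not the repo's actual schema.

```python
import json

# Hypothetical modality definition. Every field name and value below is an
# illustrative assumption, not the repo's actual schema.
modality = {
    "id": "img-to-vid-lipsync",
    "operation": "creation",  # creation vs. editing
    "input": {"primary": "image", "secondary": ["audio"]},
    "output": {
        "primary": "video",
        "audio": {"included": True, "type": "speech"},
    },
    "capabilities": ["lip_sync"],
    "metadata": {
        "maturity": "emerging",  # experimental, emerging, or mature
        "platforms": [],         # left empty rather than guessed
        "example_models": [],    # left empty rather than guessed
    },
}


def describe(record):
    """Build a one-line summary of a modality record."""
    inputs = [record["input"]["primary"], *record["input"]["secondary"]]
    audio = record["output"]["audio"]
    audio_note = f"with {audio['type']} audio" if audio["included"] else "without audio"
    return f"{' + '.join(inputs)} -> {record['output']['primary']} {audio_note}"


print(describe(modality))
print(json.dumps(modality, indent=2))
```

Nesting the audio details under the output is one way to keep "has audio" and "what kind of audio" as separate, filterable facts.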

Consider a real-world scenario: you want to generate a video of a crowded Jerusalem marketplace with ambient background audio, such as vendors calling out prices and the hum of conversation. Current platforms don't make it easy to filter for this specific combination. The taxonomy makes these distinctions explicit and queryable.
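The marketplace scenario could be expressed as a query along these lines. This is a minimal sketch, not the repo's own script: the `query` function, its parameters, the record layout, and the four sample records are all made up for illustration.

```python
def query(records, output=None, operation=None, audio_type=None, maturity=None):
    """Return the records that match every criterion that is not None."""
    results = []
    for rec in records:
        out = rec.get("output", {})
        audio = out.get("audio", {})
        if output is not None and out.get("primary") != output:
            continue
        if operation is not None and rec.get("operation") != operation:
            continue
        if audio_type is not None and not (
            audio.get("included") and audio.get("type") == audio_type
        ):
            continue
        if maturity is not None and rec.get("metadata", {}).get("maturity") != maturity:
            continue
        results.append(rec)
    return results


# Made-up sample records (hypothetical ids and values, for illustration only).
records = [
    {"id": "text-to-vid-silent", "operation": "creation",
     "output": {"primary": "video", "audio": {"included": False}},
     "metadata": {"maturity": "mature"}},
    {"id": "text-to-vid-ambient", "operation": "creation",
     "output": {"primary": "video", "audio": {"included": True, "type": "ambient"}},
     "metadata": {"maturity": "emerging"}},
    {"id": "text-to-vid-speech", "operation": "creation",
     "output": {"primary": "video", "audio": {"included": True, "type": "speech"}},
     "metadata": {"maturity": "emerging"}},
    {"id": "text-to-audio-ambient", "operation": "creation",
     "output": {"primary": "audio", "audio": {"included": True, "type": "ambient"}},
     "metadata": {"maturity": "mature"}},
]

# Video output that ships with ambient audio: the marketplace case.
matches = [r["id"] for r in query(records, output="video", audio_type="ambient")]
print(matches)  # ['text-to-vid-ambient']
```

The point is that "video with ambient audio" becomes a two-field filter instead of a manual read through model cards.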

Current scope

The taxonomy currently covers 22 modality definitions across 5 output categories, with 3 maturity levels (experimental, emerging, mature). Video generation alone accounts for 13 distinct modalities, reflecting the complexity of that space: text-to-video with speech, image-to-video with lip sync, audio-reactive video generation, and more.

The repo includes a Python query script that demonstrates filtering by output modality, operation type, characteristics, and maturity level. It also includes a JSON schema for validation, so contributors can add new modalities consistently.
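To give a feel for what validation buys contributors, here is a simplified, standard-library-only stand-in for a schema check. It is not the repo's JSON schema: the required fields and the `validate` helper are assumptions for illustration, and only the allowed output categories, operation types, and maturity levels are taken from the description above. A real setup would more likely hand the schema file to a JSON Schema validator.

```python
ALLOWED_OUTPUTS = {"video", "audio", "image", "text", "3d"}
ALLOWED_OPERATIONS = {"creation", "editing"}
ALLOWED_MATURITY = {"experimental", "emerging", "mature"}

# Assumed required top-level fields (hypothetical, not the repo's schema).
REQUIRED_FIELDS = ("id", "operation", "output", "metadata")


def validate(record):
    """Return a list of problems; an empty list means the record passed."""
    errors = [f"missing field: {key}" for key in REQUIRED_FIELDS if key not in record]
    if errors:
        return errors
    if not isinstance(record["output"], dict) or not isinstance(record["metadata"], dict):
        return ["output and metadata must be objects"]
    if record["operation"] not in ALLOWED_OPERATIONS:
        errors.append(f"unknown operation: {record['operation']}")
    if record["output"].get("primary") not in ALLOWED_OUTPUTS:
        errors.append(f"unknown output type: {record['output'].get('primary')}")
    if record["metadata"].get("maturity") not in ALLOWED_MATURITY:
        errors.append(f"unknown maturity: {record['metadata'].get('maturity')}")
    return errors


good = {
    "id": "text-to-vid-ambient",
    "operation": "creation",
    "output": {"primary": "video"},
    "metadata": {"maturity": "emerging"},
}
bad = {
    "id": "text-to-hologram",
    "operation": "creation",
    "output": {"primary": "hologram"},
    "metadata": {"maturity": "stable"},
}

print(validate(good))  # []
print(validate(bad))   # ['unknown output type: hologram', 'unknown maturity: stable']
```

Returning a list of problems rather than raising on the first one lets a contributor fix everything in a single pass.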

Open for contributions

This started as a personal reference, but I think it could be genuinely useful for the community. If you work with multimodal AI and find the current platform filtering inadequate, take a look at the GitHub repo and consider contributing new modality definitions or updating the metadata.